Troubleshooting

NAbox Architecture

Most NAbox components are delivered as containers inside the virtual appliance.

Containers are managed by docker-compose. The files for the docker-compose environment are in /usr/local/nabox/docker-compose/.

| File | Description |
|---|---|
| docker-compose.yaml | Main configuration file. Do not modify it, because it is overwritten with each update. |
| docker-compose.override.yaml | Override file. Customize the docker-compose environment by making changes here. |
| .env | Environment file. Some critical environment variables are defined here. |

You can interact with the compose stack using the dc command, which is an alias for docker-compose with the right parameters.

nabox:~# dc ps

Name Command State Ports

----------------------------------------------------------------------------------------------------------------------------------------------------------------------

cadvisor /usr/bin/cadvisor -logtostderr Up (healthy) 8080/tcp

go-carbon /init/run.sh Up (healthy) 2003/tcp, 2003/udp, 2004/tcp, 7002/tcp, 7003/tcp, 7007/tcp, 8080/tcp

grafana /run.sh Up 3000/tcp

graphite /bin/sh -c /run.sh Up 80/tcp

nabox-admin /docker-entrypoint.sh ngin ... Up 80/tcp

nabox-api python api.py Up 5000/tcp

nabox-harvest python3 harvest.py Up 5000/tcp

nabox-harvest2 python3 harvest.py Up 5000/tcp

node-exporter /bin/node_exporter --path. ... Up 9100/tcp

prometheus /bin/prometheus --config.f ... Up 9090/tcp

traefik /entrypoint.sh --api.insec ... Up 0.0.0.0:2003->2003/tcp,:::2003->2003/tcp, 0.0.0.0:443->443/tcp,:::443->443/tcp,

nabox:~# dc logs -f --tail 10 nabox-api

Attaching to nabox-api

nabox-api | INFO:werkzeug:172.99.0.9 - - [01/Apr/2022 10:01:14] "GET /api/1.0/system/disks HTTP/1.1" 200 -

nabox-api | 172.99.0.9 - - [01/Apr/2022 10:01:19] "GET /api/1.0/dashboards/nullpointmode HTTP/1.1" 200 -

nabox-api | INFO:werkzeug:172.99.0.9 - - [01/Apr/2022 10:01:19] "GET /api/1.0/dashboards/nullpointmode HTTP/1.1" 200 -

nabox-api | 172.99.0.9 - - [01/Apr/2022 10:01:27] "GET /api/1.0/system/upgrade HTTP/1.1" 200 -

nabox-api | INFO:werkzeug:172.99.0.9 - - [01/Apr/2022 10:01:27] "GET /api/1.0/system/upgrade HTTP/1.1" 200 -

nabox-api | 172.99.0.9 - - [01/Apr/2022 10:01:27] "GET /api/1.0/system/version HTTP/1.1" 200 -

nabox-api | INFO:werkzeug:172.99.0.9 - - [01/Apr/2022 10:01:27] "GET /api/1.0/system/version HTTP/1.1" 200 -

nabox-api | WARNING:root:{'text': 'harvest version 22.02.0-4 (commit 9484df6) (build date 2022-02-15T09:11:03-0500) linux/amd64', 'version': '22.02.0-4'}

nabox-api | 172.99.0.9 - - [01/Apr/2022 10:01:27] "GET /api/1.0/system/packages HTTP/1.1" 200 -

nabox-api | INFO:werkzeug:172.99.0.9 - - [01/Apr/2022 10:01:27] "GET /api/1.0/system/packages HTTP/1.1" 200 -

Containers

The following containers are deployed with NAbox:

| Container | Description |
|---|---|
| cadvisor | Exports container usage statistics for the NAbox dashboard |
| go-carbon | Ingests metrics coming from NetApp Harvest 1.x |
| grafana | Grafana dashboards |
| graphite | Presents metrics coming from NetApp Harvest 1.x to Grafana |
| nabox-admin | Web interface |
| nabox-api | NAbox API server |
| nabox-harvest | NetApp Harvest 1.x |
| nabox-harvest2 | NetApp Harvest 2.x |
| node-exporter | Exports NAbox host usage statistics for the NAbox dashboard |
| prometheus | Stores metrics coming from NetApp Harvest 2.x |
| traefik | Routes HTTPS traffic to the different containers |

Collecting logs

Most issues can be diagnosed by looking at the logs of the nabox-api and nabox-harvest2 containers:

nabox:~# dc logs -f --tail 10 nabox-api

Attaching to nabox-api

nabox-api | INFO:werkzeug:172.99.0.9 - - [01/Apr/2022 10:01:14] "GET /api/1.0/system/disks HTTP/1.1" 200 -

nabox-api | 172.99.0.9 - - [01/Apr/2022 10:01:19] "GET /api/1.0/dashboards/nullpointmode HTTP/1.1" 200 -

nabox-api | INFO:werkzeug:172.99.0.9 - - [01/Apr/2022 10:01:19] "GET /api/1.0/dashboards/nullpointmode HTTP/1.1" 200 -

nabox-api | 172.99.0.9 - - [01/Apr/2022 10:01:27] "GET /api/1.0/system/upgrade HTTP/1.1" 200 -

nabox-api | INFO:werkzeug:172.99.0.9 - - [01/Apr/2022 10:01:27] "GET /api/1.0/system/upgrade HTTP/1.1" 200 -

nabox-api | 172.99.0.9 - - [01/Apr/2022 10:01:27] "GET /api/1.0/system/version HTTP/1.1" 200 -

nabox-api | INFO:werkzeug:172.99.0.9 - - [01/Apr/2022 10:01:27] "GET /api/1.0/system/version HTTP/1.1" 200 -

nabox-api | WARNING:root:{'text': 'harvest version 22.02.0-4 (commit 9484df6) (build date 2022-02-15T09:11:03-0500) linux/amd64', 'version': '22.02.0-4'}

nabox-api | 172.99.0.9 - - [01/Apr/2022 10:01:27] "GET /api/1.0/system/packages HTTP/1.1" 200 -

nabox-api | INFO:werkzeug:172.99.0.9 - - [01/Apr/2022 10:01:27] "GET /api/1.0/system/packages HTTP/1.1" 200 -

nabox:~# dc logs -f --tail 10 nabox-harvest2

Attaching to nabox-harvest2

nabox-harvest2 | 8:05AM WRN goharvest2/cmd/collectors/zapi/plugins/quota/quota.go:180 > no [files-used-pct-file-limit] instances on this SVM_NAS.fg_oss_1605688496.bucket03 Poller=AFF plugin=Zapi:Qtree

nabox-harvest2 | 8:05AM WRN goharvest2/cmd/collectors/zapi/plugins/quota/quota.go:180 > no [files-used-pct-soft-file-limit] instances on this SVM_NAS.fg_oss_1605688496. Poller=AFF plugin=Zapi:Qtree

nabox-harvest2 | 8:05AM WRN goharvest2/cmd/collectors/zapi/plugins/quota/quota.go:180 > no [disk-used-pct-disk-limit] instances on this SVM_NAS.fg_oss_1605688496. Poller=AFF plugin=Zapi:Qtree

nabox-harvest2 | 8:05AM WRN goharvest2/cmd/collectors/zapi/plugins/quota/quota.go:180 > no [disk-used-pct-soft-disk-limit] instances on this SVM_NAS.fg_oss_1605688496. Poller=AFF plugin=Zapi:Qtree

nabox-harvest2 | 8:05AM WRN goharvest2/cmd/collectors/zapi/plugins/quota/quota.go:180 > no [disk-used-pct-threshold] instances on this SVM_NAS.fg_oss_1605688496. Poller=AFF plugin=Zapi:Qtree

nabox-harvest2 | 8:05AM WRN goharvest2/cmd/collectors/zapi/plugins/quota/quota.go:180 > no [files-used-pct-file-limit] instances on this SVM_NAS.fg_oss_1605688496. Poller=AFF plugin=Zapi:Qtree

nabox-harvest2 | 8:05AM WRN goharvest2/cmd/collectors/zapi/plugins/quota/quota.go:180 > no [files-used-pct-soft-file-limit] instances on this NAS_SVM_MC1.VOL_DEMO_CIFS.qtree1 Poller=ClusterA plugin=Zapi:Qtree

nabox-harvest2 | 8:05AM WRN goharvest2/cmd/collectors/zapi/plugins/quota/quota.go:180 > no [disk-used-pct-soft-disk-limit] instances on this NAS_SVM_MC1.VOL_DEMO_CIFS.qtree1 Poller=ClusterA plugin=Zapi:Qtree

nabox-harvest2 | 8:05AM WRN goharvest2/cmd/collectors/zapi/plugins/quota/quota.go:180 > no [disk-used-pct-threshold] instances on this NAS_SVM_MC1.VOL_DEMO_CIFS.qtree1 Poller=ClusterA plugin=Zapi:Qtree

nabox-harvest2 | 8:05AM ERR goharvest2/cmd/poller/collector/collector.go:375 > plugin [Qtree]: error="no instances => no quota instances found" Poller=ClusterB collector=Zapi:Qtree stack=[{"func":"New","line":"35","source":"errors.go"},{"func":"(*Quota).Run","line":"156","source":"quota.go"},{"func":"(*AbstractCollector).Start","line":"374","source":"collector.go"},{"func":"goexit","line":"1581","source":"asm_amd64.s"}]
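When the log output is long, it can help to summarize which pollers emit warnings and errors. The following is a minimal sketch in Python, assuming the line layout shown in the sample output above (a WRN or ERR level followed later by a Poller=<name> field). The names count_by_poller and LINE_RE are illustrative and not part of NAbox or Harvest, and the sample lines are abridged from the output above.

```python
import re
from collections import Counter

# Matches the level and the Poller=<name> field seen in the sample
# nabox-harvest2 output; other log layouts are simply ignored.
LINE_RE = re.compile(r"\b(?P<level>WRN|ERR)\b.*?\bPoller=(?P<poller>\S+)")


def count_by_poller(lines):
    """Return a Counter keyed by (poller, level) for WRN and ERR lines."""
    counts = Counter()
    for line in lines:
        match = LINE_RE.search(line)
        if match:
            counts[(match.group("poller"), match.group("level"))] += 1
    return counts


# Two lines abridged from the sample output in this section.
sample = [
    "nabox-harvest2 | 8:05AM WRN goharvest2/cmd/collectors/zapi/plugins/quota/quota.go:180 "
    "> no [disk-used-pct-threshold] instances on this NAS_SVM_MC1.VOL_DEMO_CIFS.qtree1 "
    "Poller=ClusterA plugin=Zapi:Qtree",
    "nabox-harvest2 | 8:05AM ERR goharvest2/cmd/poller/collector/collector.go:375 "
    '> plugin [Qtree]: error="no instances => no quota instances found" '
    "Poller=ClusterB collector=Zapi:Qtree",
]

for (poller, level), count in sorted(count_by_poller(sample).items()):
    print(poller, level, count)
```

To run it against real output, save the logs to a file first (for example with dc logs nabox-harvest2 > nabox-harvest2.log) and pass the open file object to count_by_poller.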

You can save those logs to files and create an archive like so:

dc logs nabox-api > nabox-api.log

dc logs nabox-harvest2 > nabox-harvest2.log

tar -czf nabox-logs-`date +%Y-%m-%d_%H-%M-%S`.tgz nabox-api.log nabox-harvest2.log

Collecting Harvest Configuration

To collect a redacted copy of harvest.yml, run the following command:

dc exec -w /conf nabox-harvest2 /netapp-harvest/bin/harvest doctor --print

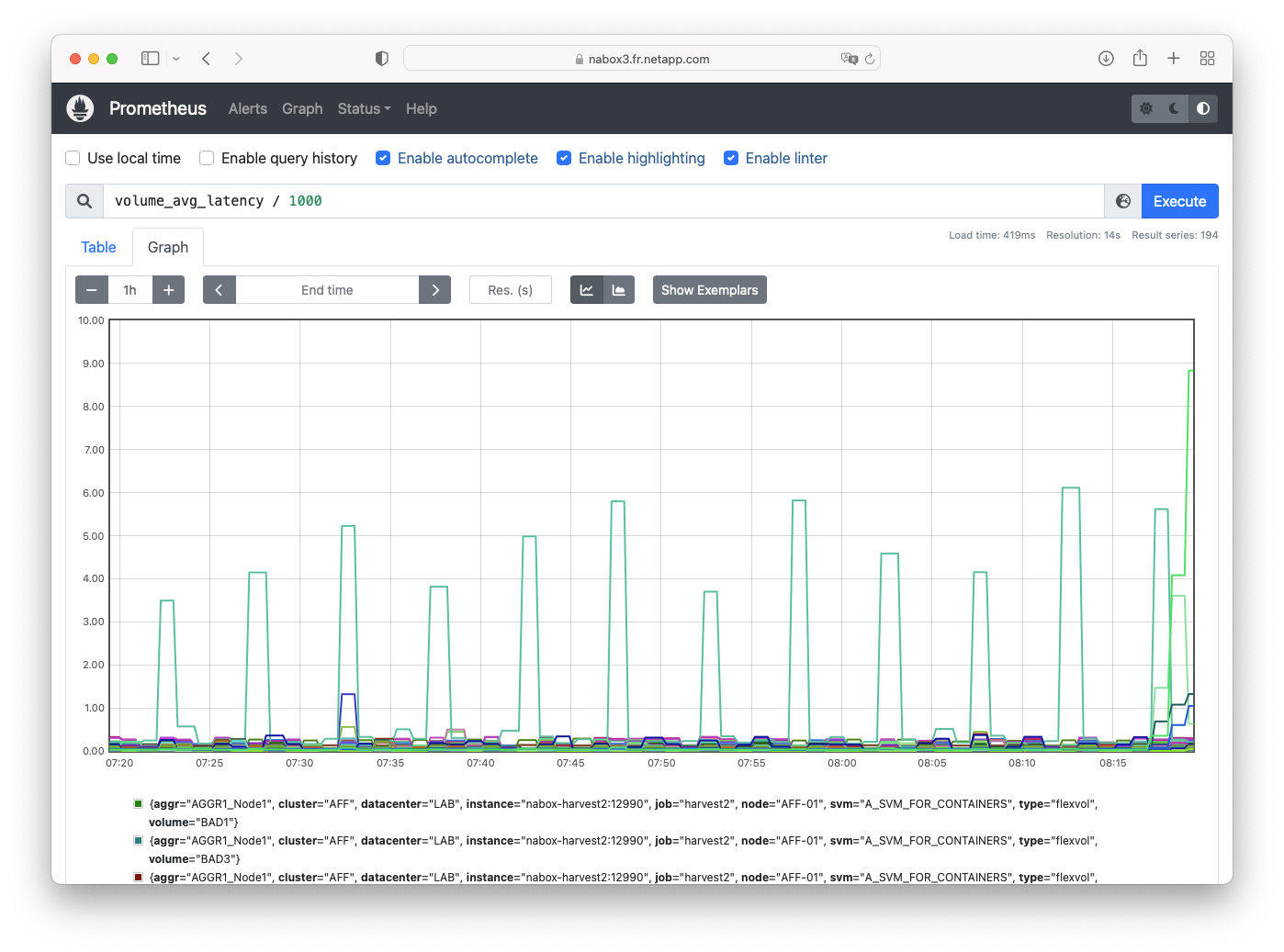

Using Prometheus and Graphite

Both Prometheus and Graphite are exposed outside the appliance, so you can browse metrics and test queries.

Point your browser to https://<nabox ip>/prometheus/ or https://<nabox ip>/graphite/ accordingly.
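The same endpoints can also be queried from a script. The sketch below only builds the request URLs, using the standard Prometheus instant-query path (/api/v1/query) and the Graphite render path (/render). It assumes those API paths are reachable under the /prometheus/ and /graphite/ prefixes given above, which this page does not confirm. The host name and the Graphite target are placeholders, and the function names are illustrative.

```python
from urllib.parse import urlencode


def prometheus_query_url(host, promql):
    """Build an instant-query URL for Prometheus behind the /prometheus/ prefix."""
    return f"https://{host}/prometheus/api/v1/query?" + urlencode({"query": promql})


def graphite_render_url(host, target, since="-1h"):
    """Build a Graphite render URL that asks for JSON datapoints."""
    params = {"target": target, "from": since, "format": "json"}
    return f"https://{host}/graphite/render?" + urlencode(params)


# Placeholder host and metric names; replace them with your own.
print(prometheus_query_url("nabox.example.com", "up"))
print(graphite_render_url("nabox.example.com", "netapp.perf.*"))
```

The resulting URLs can be opened in a browser or fetched with any HTTP client. Depending on your deployment, the client may need to trust the NAbox certificate.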

Configuration files

NAbox stores most configuration files in the /opt/ directory:

| Directory | Description |
|---|---|
| harvest-conf | Configuration file for Harvest 1.x (deprecated) |
| harvest2-conf | Harvest 2.x configuration directory |
| secrets | Various tokens for inter-container communication and certificate authorities |
| ssl | Traefik reverse proxy configuration and certificate files |

harvest-conf

In this directory you will find the main configuration file for Harvest. Normally, this file is managed only by the NAbox Web UI and shouldn't be modified externally.

It is possible, though, to copy this file from one NAbox to another when migrating, or to generate it with an external script, as long as you take extra care to include all the properties a poller requires to function properly. Specifically, prometheus_port is required and must be unique across that file.

When you modify that file, you should see the changes reported in the Web UI.
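If you generate the file with a script, a quick check of the prometheus_port rule before deploying it can catch mistakes early. The following is a minimal sketch that works on an already parsed mapping of poller names to settings. The shape of that mapping, the poller names, and the addresses are assumptions for illustration, and check_prometheus_ports is a hypothetical helper, not a NAbox or Harvest function.

```python
def check_prometheus_ports(pollers):
    """Return a list of problems found in a mapping of poller name -> settings."""
    problems = []
    seen = {}  # port -> first poller that claimed it
    for name, settings in pollers.items():
        port = settings.get("prometheus_port")
        if port is None:
            problems.append(f"{name}: prometheus_port is missing")
        elif port in seen:
            problems.append(f"{name}: prometheus_port {port} already used by {seen[port]}")
        else:
            seen[port] = name
    return problems


# Hypothetical pollers, shaped like a parsed poller section of the file.
pollers = {
    "ClusterA": {"addr": "10.0.0.1", "prometheus_port": 12990},
    "ClusterB": {"addr": "10.0.0.2", "prometheus_port": 12990},
    "ClusterC": {"addr": "10.0.0.3"},
}

for problem in check_prometheus_ports(pollers):
    print(problem)
```

An empty list means every poller has a port and no port is used twice. Loading the file into a mapping is left out here because it depends on the YAML parser you have available.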

Note

In the Web UI, only systems having Zapi, ZapiPerf or StorageGrid collectors will be displayed.

By convention, internal pollers starting with _, like _unix, are not visible.
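The display rule in the note can be expressed as a small predicate, which is handy when a generated file contains pollers that do not show up in the Web UI. This is only a sketch of the rule as described here, not the Web UI's actual implementation. The function name, the constant, and the example pollers are illustrative.

```python
# Collector names taken from the note above.
VISIBLE_COLLECTORS = {"Zapi", "ZapiPerf", "StorageGrid"}


def is_visible(name, collectors):
    """Return True when a poller would be displayed according to the note."""
    if name.startswith("_"):
        # Internal pollers such as _unix are hidden by convention.
        return False
    return any(collector in VISIBLE_COLLECTORS for collector in collectors)


print(is_visible("ClusterA", ["Zapi", "ZapiPerf"]))
print(is_visible("_unix", ["Unix"]))
```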

secrets

The secrets directory holds the tokens used for communication between the different products in NAbox:

| File | Description |
|---|---|
| grafana | Grafana API token used when adding datasources or customizing dashboard properties from the Web UI |
| harvest-api | Harvest REST frontend authentication token (documentation at https://<nabox ip>/harvest2/1.0/ui/) |
| harvest-grafana | Grafana API token used when injecting dashboards during Harvest 2 installation or dashboard reset from the Web UI |
| jwt-secret | JWT seed, unique for each NAbox deployment, used to generate the Web UI session token |
| nabox-api | NAbox REST frontend API token. You can also use the NAbox admin user to authenticate (documentation at https://<nabox ip>/api/1.0/ui/) |

ssl

The ssl directory contains certificate files for the NAbox web server.

When you generate a Certificate Signing Request from the REST API, the directory will also contain the .csr file.

Feel free to copy your own private key and public certificate as nabox.key and nabox.crt respectively, then restart traefik with dc restart traefik.

| File | Description |
|---|---|
| nabox.crt | Public certificate for the NAbox web server |
| nabox.csr | Certificate Signing Request, if there is one pending. A CSR can be generated through the REST interface. |
| nabox.key | Private key for the NAbox web server |
| traefik.yaml | Traefik reverse proxy configuration file |
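Before restarting traefik with your own files, it can be worth confirming that the certificate and the private key belong together. The sketch below relies on Python's standard ssl module, whose load_cert_chain call fails when the key does not match the certificate. It assumes PEM files and an unencrypted private key. The /opt/ssl/ paths are inferred from the directory table above, and cert_matches_key is a hypothetical helper name.

```python
import ssl


def cert_matches_key(cert_path, key_path):
    """Return True when the certificate and private key load as a matching pair."""
    context = ssl.SSLContext(ssl.PROTOCOL_TLS_SERVER)
    try:
        context.load_cert_chain(certfile=cert_path, keyfile=key_path)
    except (ssl.SSLError, OSError):
        # Raised for a mismatched pair, an unreadable PEM file, or a missing file.
        return False
    return True


# Paths inferred from the tables above; adjust them if your layout differs.
print(cert_matches_key("/opt/ssl/nabox.crt", "/opt/ssl/nabox.key"))
```

A result of False does not say which file is at fault, only that the pair could not be loaded together.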